Continuous Model Confidence

How We Evaluate, Monitor, and Maintain Model Performance Over Time

Our explainable AI suite spans the full MLOps lifecycle, combining data analysis, performance evaluation, and continuous monitoring. This enables teams to understand model behavior, validate performance against mission-relevant conditions, and track changes in data and environments to maintain trust over time.

01

We analyze training and testing data to assess composition, quality, and coverage, while establishing data and model lineage for explainability. This foundation enables testing with limited or low-quality datasets, which reduces data acquisition time and cost.

02

Evaluate Performance Across Real-World and Edge Conditions

Model performance is evaluated across mission-relevant scenarios using defense and academic metrics aligned with end-user evaluation frameworks, giving teams a clear, consistent understanding of how models will behave outside controlled environments.

03

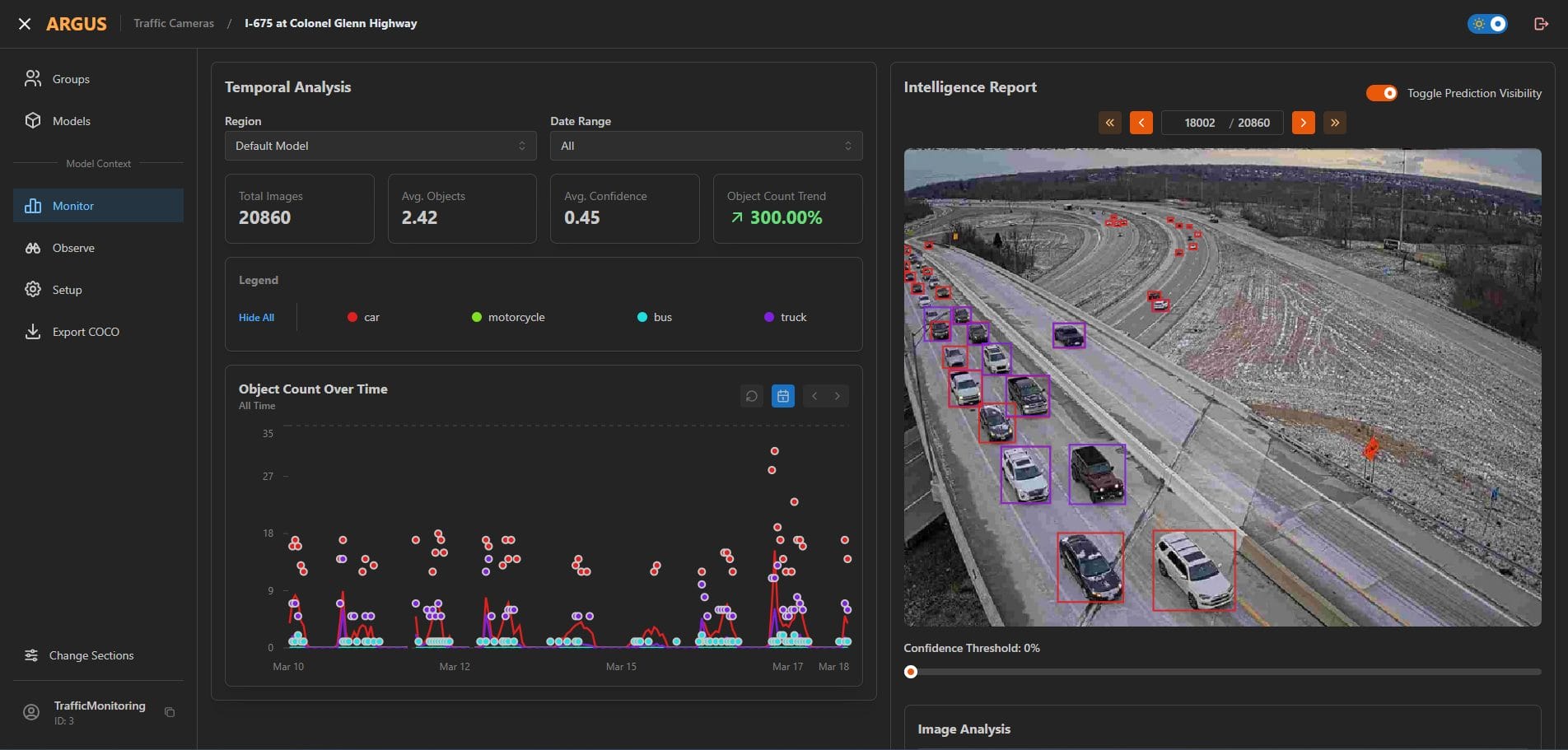

Detect Failures and Connect Model Performance to Real Data Conditions

When performance changes occur, we enable teams to trace those changes back to specific data conditions and environmental factors. By correlating model outcomes with real input characteristics, teams can quickly diagnose issues, quantify uncertainty, identify coverage gaps, and prioritize targeted improvements to maintain performance and readiness.
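As a rough illustration of tracing performance back to data conditions, the sketch below groups evaluation results by a condition tag and reports accuracy and sample count for each group. It is a minimal example under stated assumptions, not a description of Etegent's tooling: the function name `accuracy_by_condition`, the condition labels, the `min_samples` cutoff, and the sample records are all hypothetical.

```python
from collections import defaultdict


def accuracy_by_condition(records, min_samples=5):
    """Summarize accuracy per data condition.

    records: iterable of (condition, is_correct) pairs (hypothetical format).
    min_samples: groups with fewer records are flagged as coverage gaps
                 (illustrative cutoff, not a recommended value).
    """
    totals = defaultdict(lambda: [0, 0])  # condition -> [correct, total]
    for condition, is_correct in records:
        totals[condition][0] += int(is_correct)
        totals[condition][1] += 1

    report = {}
    for condition, (correct, total) in totals.items():
        report[condition] = {
            "accuracy": correct / total,
            "samples": total,
            "coverage_gap": total < min_samples,
        }
    return report


# Made-up evaluation results tagged with an environmental condition.
records = (
    [("clear", True)] * 18 + [("clear", False)] * 2
    + [("fog", True)] * 6 + [("fog", False)] * 4
    + [("night", True)] * 1 + [("night", False)] * 1
)

report = accuracy_by_condition(records)

# List the weakest conditions first so they can be prioritized.
for condition, stats in sorted(report.items(), key=lambda kv: kv[1]["accuracy"]):
    print(condition, stats)
```

Sorting by accuracy surfaces the conditions where the model struggles, while the `coverage_gap` flag separates "the model is weak here" from "there is too little data here to tell".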

04

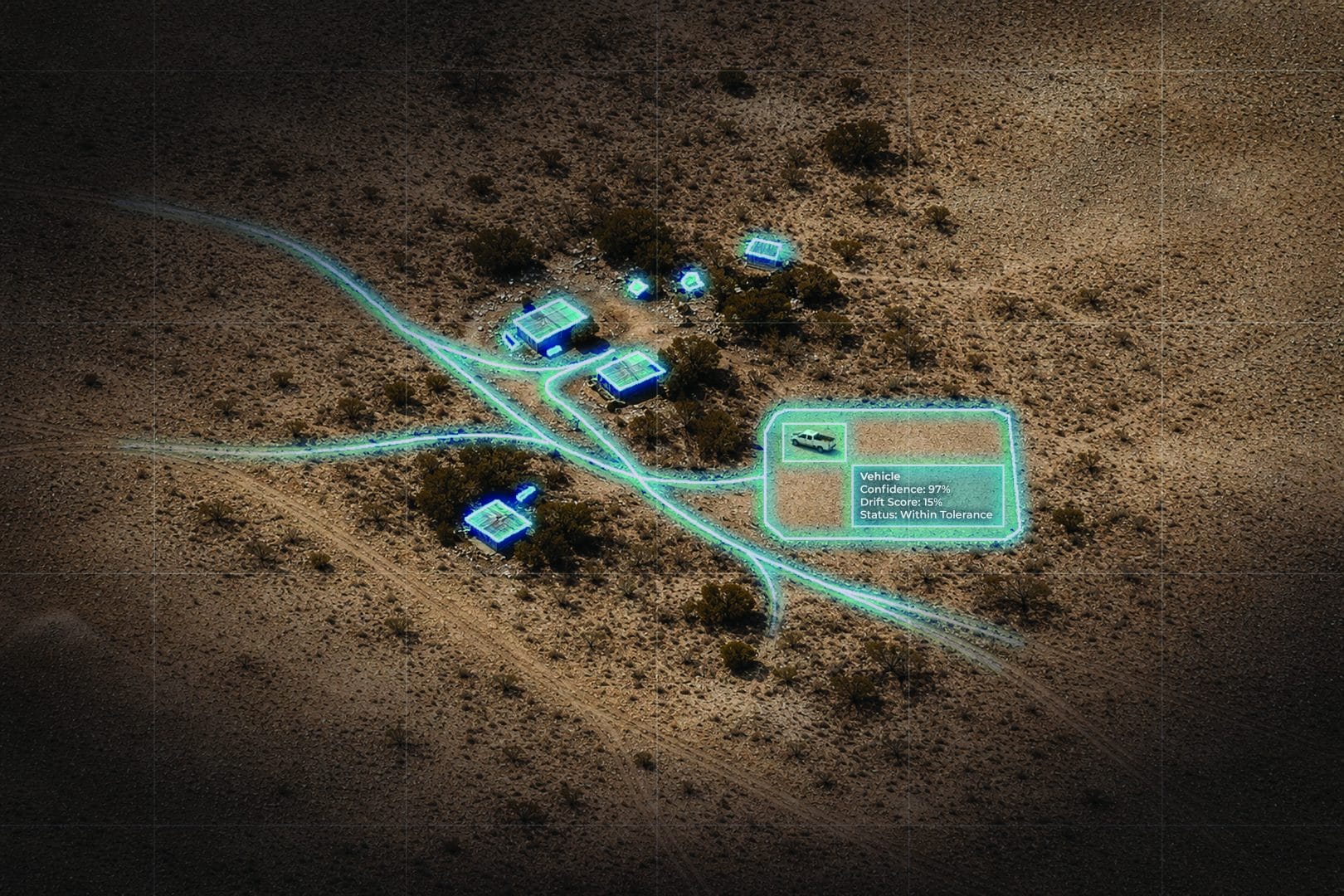

Monitor Model Behavior and Identify Data Shifts

Once deployed, models are continuously monitored by combining model features with input data to detect anomalies, distribution shifts, and performance degradation. This gives teams visibility into model behavior and alerts them to potential failures before they impact outcomes.
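One common way to flag a distribution shift in a single numeric feature is the two-sample Kolmogorov-Smirnov (KS) statistic, which measures the largest gap between the empirical distributions of a reference sample and a live sample. The sketch below is a generic illustration of that technique, assumed here for the sake of example rather than taken from the product. The function names, the synthetic data, and the 0.05 significance level are hypothetical choices, and the threshold uses the standard large-sample approximation.

```python
import bisect
import math
import random


def ks_statistic(reference, live):
    """Two-sample KS statistic: the largest gap between the two empirical CDFs."""
    ref = sorted(reference)
    cur = sorted(live)
    max_gap = 0.0
    # The largest gap always occurs at one of the observed values.
    for x in ref + cur:
        cdf_ref = bisect.bisect_right(ref, x) / len(ref)
        cdf_cur = bisect.bisect_right(cur, x) / len(cur)
        max_gap = max(max_gap, abs(cdf_ref - cdf_cur))
    return max_gap


def drift_detected(reference, live, alpha=0.05):
    """Flag drift when the KS statistic exceeds the large-sample critical value."""
    n, m = len(reference), len(live)
    critical = math.sqrt(-math.log(alpha / 2) / 2) * math.sqrt((n + m) / (n * m))
    return ks_statistic(reference, live) > critical


# Synthetic feature values: a reference window and a live window whose mean has moved.
random.seed(0)
reference = [random.gauss(0.0, 1.0) for _ in range(500)]
shifted = [random.gauss(1.5, 1.0) for _ in range(500)]

print("KS statistic:", round(ks_statistic(reference, shifted), 3))
print("Drift flagged:", drift_detected(reference, shifted))
```

A per-feature test like this only covers one dimension at a time. In practice it would be one signal among several, alongside checks on model outputs and measured performance.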

The Etegent Advantage

Explainable AI Benefits

Monitor model health, diagnose issues, validate performance, and detect failures early to ensure AI outputs remain reliable in mission-critical environments.

Understand and Diagnose Model Behavior

Validate and Deploy

Detect Failures

Ready To Understand, Monitor & Validate Model Behavior?

AI systems must remain reliable across the full lifecycle, from dataset design to deployment. Our explainable AI tools enable teams to design and analyze data, evaluate real-world performance, and continuously monitor behavior with immediate alerts when failures occur in deployment.